In September 2022, IPTC Managing Director Brendan Quinn was invited to attend a workshop at the Royal Society in London, held in conjunction with the BBC. It was convened to discuss concerns about content provenance, threats to society due to misinformation and disinformation, and the idea of a “public-service internet.”

A note summarising the outcomes of the meeting has now been published. The Royal Society says, “This note provides a summary of workshop discussions exploring the potential of digital content provenance and a ‘public service internet’.”

The workshop note gives a summary of key takeaways from the event:

- Digital content provenance is an imperfect and limited – yet still critically important – solution to the challenge of AI-generated misinformation.

- A provenance-establishing system that can account for the international and culturally diverse nature of misinformation is essential for its efficacy.

- Digital content provenance tools present significant technical and ethical challenges, including risks related to privacy, security and literacy.

- Understanding how best to embed ideas such as digital content provenance into counter-misinformation strategies may require revisiting the rules which dictate how information is transmitted over the internet.

- A ‘public service internet’ presents an interesting and new angle through which public service objectives can shape the information environment; however, the end state of such a system requires greater clarity and should include a wide range of voices, including historically excluded groups.

The IPTC is already participating in several projects looking at concrete responses to the problems of misinformation and disinformation in the media, via our work theme on Trust and Credibility in the Media. We are on the Steering Committee of Project Origin, and work closely with C2PA and the Content Authenticity Initiative.

The IPTC looks forward to further work in this area. The IPTC and its members will be happy to contribute to more workshops and studies by the Royal Society and other groups.

In partnership with the IPTC, the PLUS Coalition has published for public comment a draft on proposed revisions to the PLUS License Data Format standard. The changes cover a proposed standard for expressing image data mining permissions, constraints and prohibitions. This includes declaring in image files whether an image can be used as part of a training data set used to train a generative AI model.

Review the draft revisions in this read-only Google doc, which includes a link to a form for leaving comments.

Here is a summary of the new property:

| XMP Property | plus:DataMining |
|---|---|
| XMP Value Type | URL |
| XMP Category | External |
| Namespace URI | http://ns.useplus.org/ldf/xmp/1.0/DataMining |
| Comments | |
| Cardinality | 0..1 |

According to the PLUS proposal, the value of the property would be a value from the following controlled vocabulary:

| CV Term URI | Description |
|---|---|
| http://ns.useplus.org/ldf/vocab/DMI-UNSPECIFIED | Neither allowed nor prohibited |
| http://ns.useplus.org/ldf/vocab/DMI-ALLOWED | Allowed |
| http://ns.useplus.org/ldf/vocab/DMI-ALLOWED-noaitraining | Allowed except for AI/ML training |
| http://ns.useplus.org/ldf/vocab/DMI-ALLOWED-noaigentraining | Allowed except for AI/ML generative training |
| http://ns.useplus.org/ldf/vocab/DMI-PROHIBITED | Prohibited |
| http://ns.useplus.org/ldf/vocab/DMI-SEE-constraint | Allowed with constraints expressed in Other Constraints property |
| http://ns.useplus.org/ldf/vocab/DMI-SEE-embeddedrightsexpr | Allowed with constraints expressed in IPTC Embedded Encoded Rights Expression property |
| | Allowed with constraints expressed in IPTC Linked Encoded Rights Expression property |
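As a rough sketch of how software might act on this draft vocabulary, the Python below maps a `plus:DataMining` value to an answer about generative AI training. The function name `may_train_generative_ai` is hypothetical and not part of the PLUS proposal, and the yes/no mapping is only one reading of the descriptions in the table above. Terms that defer to another property, along with unspecified or unknown values, are deliberately left undecided.

```python
# Base URI taken from the controlled vocabulary table above.
PLUS_VOCAB = "http://ns.useplus.org/ldf/vocab/"

# Terms that settle the generative-AI-training question on their own.
# True = allowed, False = not allowed. This mapping is an assumption
# based on the term descriptions, not text from the PLUS draft.
_GENERATIVE_TRAINING = {
    "DMI-ALLOWED": True,
    "DMI-ALLOWED-noaitraining": False,     # all AI/ML training excluded
    "DMI-ALLOWED-noaigentraining": False,  # generative training excluded
    "DMI-PROHIBITED": False,
}


def may_train_generative_ai(data_mining_value):
    """Return True or False when the term decides it, otherwise None.

    None covers DMI-UNSPECIFIED, the DMI-SEE-* terms (which point to
    another property), a missing property and unrecognised values.
    """
    if not data_mining_value or not data_mining_value.startswith(PLUS_VOCAB):
        return None
    term = data_mining_value[len(PLUS_VOCAB):]
    return _GENERATIVE_TRAINING.get(term)
```

A caller that receives `None` would need to inspect the referenced constraint or rights expression property, or fall back to its own policy.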

The public comment period will close on July 20, 2023.

If it is accepted by the PLUS membership and published as a PLUS property, the IPTC Photo Metadata Working Group plans to adopt this new property into a new version of the IPTC Photo Metadata Standard at the IPTC Autumn Meeting in October 2023.

CIPA, the Camera and Imaging Products Association based in Japan, has released version 3.0 of the Exif standard for camera data.

The new specification, “CIPA DC-008-Translation-2023 Exchangeable image file format for digital still cameras: Exif Version 3.0” can be downloaded from https://www.cipa.jp/std/documents/download_e.html?DC-008-Translation-2023-E.

Version 1.0 of Exif was released in 1995. The previous revision, 2.32, was released in 2019. The new version introduces some major changes so the creators felt it was necessary to increment the major version number.

Fully internationalised text tags

In previous versions, text-based fields such as Copyright and Artist were required to be in ASCII format, meaning that it was impossible to express many non-English words in Exif tags. (In practice, many software packages simply ignored this advice and used other character sets anyway, violating the specification.)

In Exif 3.0, a new datatype “UTF-8” is introduced, meaning that the same field can now support internationalised character sets, from Chinese to Arabic and Persian.
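To illustrate the difference, here is a minimal Python sketch that decides whether a text value fits the older ASCII type or needs the new UTF-8 type. The helper name `exif_text_type` is hypothetical; it only shows the character-set check and does not write any Exif data.

```python
def exif_text_type(value):
    """Return "ASCII" if every character is 7-bit ASCII, else "UTF-8"."""
    return "ASCII" if value.isascii() else "UTF-8"


# An English name fits the old ASCII-only rule...
artist_en = "Jane Smith"
# ...while a Japanese name can only be stored correctly as UTF-8.
artist_ja = "山田太郎"

for artist in (artist_en, artist_ja):
    encoded = artist.encode("utf-8")
    print(artist, exif_text_type(artist), len(encoded), "bytes")
```

Note that the byte length of a UTF-8 value can exceed its character count, which matters for any software that allocates space for the tag.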

Unique IDs

The definition of the ImageUniqueID tag has been updated to more clearly specify what type of ID can be used, when it should be updated (never!), and to suggest an algorithm:

This tag indicates an identifier assigned uniquely to each image. It shall be recorded as an ASCII string in hexadecimal notation equivalent to 128-bit fixed length UUID compliant with ISO/IEC 9834-8. The UUID shall be UUID Version 1 or Version 4, and UUID Version 4 is recommended. This ID shall be assigned at the time of shooting image, and the recorded ID shall not be updated or erased by any subsequent editing.
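A minimal Python sketch of the recommended approach, using the standard `uuid` module to create a Version 4 UUID and render it as a hexadecimal ASCII string. The function names are hypothetical, and the sketch assumes the value is stored as 32 hexadecimal digits without hyphens; check the specification for the exact encoding before relying on it.

```python
import uuid


def new_image_unique_id():
    """Create a Version 4 UUID as 32 hexadecimal ASCII characters."""
    return uuid.uuid4().hex


def is_valid_image_unique_id(value):
    """Check for a 32-digit hex string holding a Version 1 or 4 UUID."""
    if len(value) != 32:
        return False
    try:
        parsed = uuid.UUID(hex=value)
    except ValueError:
        return False
    return parsed.version in (1, 4)


image_id = new_image_unique_id()
print(image_id, is_valid_image_unique_id(image_id))
```

Since the ID is meant to be assigned once at capture time, editing software would only ever call the validation function, never the generator, on an existing image.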

Guidance on when and how tag values can be modified or removed

Exif 3.0 adds a new appendix, Annex H, “Guidelines for Handling Tag Information in Post-processing by Application Software”, which groups metadata into categories such as “structure-related metadata” and “shooting condition-related metadata”. It also classifies metadata in groups based on when they should be modified or deleted, if ever.

| Category | Description | Examples (list may not be exhaustive) |
|---|---|---|
| Update 0 | Shall be updated with image structure change | DateTime (should be updated with every edit), ImageWidth, Compression, BitsPerSample |
| Update 1 | Can be updated regardless of image structure change | ImageDescription, Software, Artist, Copyright, UserComment, ImageTitle, ImageEditor, ImageEditingSoftware, MetadataEditingSoftware |
| Freeze 0 | Shall not be deleted/updated at any time | ImageUniqueID |
| Freeze 1 | Can be deleted in special cases | Make, Model, BodySerialNumber |
| Freeze 2 | Can be corrected [if wrong], added [if empty] or deleted [in special cases] | DateTimeOriginal, DateTimeDigitized, GPSLatitude, GPSLongitude, LensSpecification, Humidity |
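Software could encode these categories as a simple lookup. The Python sketch below covers only a few of the example tags listed in the table above; the names `Handling`, `EXAMPLE_TAGS`, `category_of` and `must_never_change` are hypothetical and are not defined by the Exif specification.

```python
from enum import Enum


class Handling(Enum):
    """Annex H handling categories, with the descriptions from the table."""
    UPDATE_0 = "Shall be updated with image structure change"
    UPDATE_1 = "Can be updated regardless of image structure change"
    FREEZE_0 = "Shall not be deleted/updated at any time"
    FREEZE_1 = "Can be deleted in special cases"
    FREEZE_2 = "Can be corrected, added or deleted in special cases"


# A partial mapping built from the example tags in the table.
EXAMPLE_TAGS = {
    "ImageWidth": Handling.UPDATE_0,
    "Artist": Handling.UPDATE_1,
    "Copyright": Handling.UPDATE_1,
    "ImageUniqueID": Handling.FREEZE_0,
    "BodySerialNumber": Handling.FREEZE_1,
    "DateTimeOriginal": Handling.FREEZE_2,
}


def category_of(tag):
    """Return the handling category for a tag, or None if not listed here."""
    return EXAMPLE_TAGS.get(tag)


def must_never_change(tag):
    """True only for tags in the Freeze 0 category."""
    return category_of(tag) is Handling.FREEZE_0


print(must_never_change("ImageUniqueID"), must_never_change("Artist"))
```

A real implementation would need the complete tag list from Annex H rather than this short sample.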

Collaboration between CIPA and IPTC

CIPA and IPTC representatives meet regularly to discuss issues that are relevant to both organisations. During these meetings IPTC has contributed suggestions to the Exif project, particularly around internationalised fields and unique IDs.

We congratulate our friends at CIPA on reaching this milestone, and hope to continue collaborating in the future.

Developers of photo management software understand that values of Exif tags and IPTC Photo Metadata properties with a similar purpose should be synchronised, but it has not always been clear exactly which properties should be aligned. IPTC and CIPA collaborated to create a Mapping Guideline to help software developers implement this synchronisation properly. Most professional photo software now supports these mappings.

Complete list of changes in Exif 3.0

The full set of changes in Exif 3.0 is as follows (taken from the history section of the PDF document):

- Added Tag Type of UTF-8 as Exif specific tag type.

- Enabled to select UTF-8 character string in existing ASCII-type tags

- Enabled APP11 Marker Segment to store a Box-structured data compliant with the JPEG System standard

- Added definition of Box-structured Annotation Data

- Added and changed the following tags:

  - Added Title Tag

  - Added Photographer Information related Tags (Photographer and ImageEditor)

  - Added Software Information related Tags (CameraFirmware, RAWDevelopingSoftware, ImageEditingSoftware, and MetadataEditingSoftware)

  - Changed Software, Artist, and ImageUniqueID

- Corrected incorrect definition of GPSAltitudeRef

- GPSMeasureMode tag became to support positioning information obtained from GNSS in addition to GPS

- Changed the description support levels of the following tags:

  - XResolution

  - YResolution

  - ResolutionUnit

  - FlashpixVersion

- Discarded Annex E.3 to specify Application Software Guidelines

- Added Annex H. (at the time of publication) to specify Guidelines for Handling Tag Information in Post-processing by Application Software

- Added Annex I. and J. (both at the time of publication) for supplemental information of Annotation Data

- Added Annex K. (at the time of publication) to specify Original Preservation Image

- Corrected errors, typos and omissions accumulated up to this edition

- Restructured and revised the entire document structure and style

The IPTC is very happy to announce that it has joined the Steering Committee of Project Origin, one of the industry’s key initiatives to fight misinformation online through the use of tamper-evident metadata embedded in media files.

After the IPTC had worked with Project Origin over a number of years and co-hosted a series of workshops during 2022, the organisation formally invited the IPTC to join the Steering Committee.

Current Steering Committee members are Microsoft, the BBC and CBC / Radio Canada. The New York Times also participates in Steering Committee meetings through its Research & Development department.

“We were very happy to co-host with Project Origin a productive series of webinars and workshops during 2022, introducing the details of C2PA technology to the news and media industry and discussing the remaining issues to drive wider adoption,” says Brendan Quinn, Managing Director of the IPTC.

C2PA, the Coalition for Content Provenance and Authenticity, took a set of requirements from both Project Origin and the Content Authenticity Initiative to create a technical means of associating media files with information on the origin and subsequent modifications of news stories and other media content.

“Project Origin’s aim is to take the ground-breaking technical specification created by C2PA and make it realistic and relevant for newsrooms around the world,” Quinn said. “This is very much in keeping with the IPTC’s mission to help media organisations to succeed by sharing best practices, creating open standards and facilitating collaboration between media and technology organisations.”

“The IPTC is a perfect partner for Project Origin as we work to connect newsrooms through secure metadata,” said Bruce MacCormack, the CBC/Radio-Canada Co-Lead.

The announcement was made at the Trusted News Initiative event held in London today, 30 March 2023, where representatives of the BBC, AFP, Microsoft, Meta and many others gathered to discuss trust, misinformation and authenticity in news media.

Learn more about Project Origin by contacting us or viewing the video below:

IPTC Managing Director Brendan Quinn presented at the European Broadcasting Union’s Data Technology Seminar last week.

The DataTech Seminar, known in previous years as the Metadata Developers Network, brought over 100 technologists together in person in Geneva to discuss topics related to managing data at broadcasters in Europe and around the world.

Brendan spoke on Tuesday 21st March on a panel discussing Artificial Intelligence and the Media. Brendan used the opportunity to discuss IPTC’s current work on “do not train” signals in metadata, and on establishing best practices for how AI tools can embed metadata indicating the origin of their media.

The work of C2PA, Project Origin and Content Authenticity Initiative on addressing content provenance and tamper-evident media was also highlighted by Brendan during the panel discussion, as this relates to the prevalence of “deepfake” content that can be created by generative AI engines.

On Wednesday 22nd March, Brendan spoke in lieu of Paul Kelly, lead of the IPTC Sports Content Working Group, about the IPTC Sport Schema project. The session was called “IPTC Sport Schema – the next generation of sports data.” An evolution of IPTC’s SportsML standard, IPTC Sport Schema brings our 20 years of experience in sports data markup to the world of Knowledge Graphs and the Semantic Web. The specification is coming close to a version 1, so we were very proud to present it to some of the world’s top broadcasters and industry players.

The IPTC Sport Schema site sportschema.org now includes comprehensive documentation of the ontology behind the sports data model, examples of how it can be queried using SPARQL, example data files and instance diagrams showing how it can be used to represent common sports such as athletics, soccer, golf and hockey.

We look forward to discussing IPTC Sport Schema much more over the coming months, as we draw close to its general release.

EBU members can watch the full presentation at the EBU.ch site.

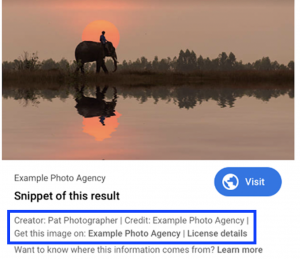

Our friends at CEPIC are running a webinar in conjunction with Google on the Licensable badge in search results. The webinar is TODAY, February 21st, so there are still a few hours left to join.

Register for free at https://www.eventbrite.com/e/google-webinar-image-seo-and-licensable-badge-tickets-532031278877

Google webinar: Image SEO and Licensable Badge

In this webinar, John Mueller, Google’s Search Advocate, will cover Image SEO Best Practices and Google’s Licensable Badge. For the Licensable Badge, John will give an overview of the product and implementation guidelines. There will also be time for a Q&A session.

One of the methods for enabling your licensing metadata to be surfaced in Google Search results is to embed the correct IPTC Photo Metadata directly into image files. The other is to use schema.org markup in the page hosting the image. We explain more in the Quick Guide to IPTC Photo Metadata on Google Images, but you can also learn about it by attending this webinar.
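As a hedged illustration of the schema.org route, the Python sketch below builds a JSON-LD block for an `ImageObject` with the `license` and `acquireLicensePage` properties. The function name and the example.com URLs are placeholders invented for this sketch; consult Google’s own documentation for the current requirements.

```python
import json


def licensable_image_jsonld(content_url, license_url, acquire_license_url):
    """Return schema.org ImageObject markup as a JSON-LD string."""
    markup = {
        "@context": "https://schema.org/",
        "@type": "ImageObject",
        "contentUrl": content_url,
        "license": license_url,
        "acquireLicensePage": acquire_license_url,
    }
    return json.dumps(markup, indent=2)


# Placeholder URLs for illustration only.
print(licensable_image_jsonld(
    "https://example.com/photos/sunset.jpg",
    "https://example.com/license",
    "https://example.com/how-to-license",
))
```

The resulting string would be placed inside a `<script type="application/ld+json">` element on the page that hosts the image.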

Tuesday 21st February 2023, at 4 PM – 5 PM Central European Time

Topics covered include:

• Image SEO best practices

• Licensable badge in Google image search results

• Q&A

This is a free webinar open to all those interested, not just CEPIC or IPTC members.

We are happy to announce that IPTC’s work with C2PA, the Coalition for Content Provenance and Authenticity, continues to bear fruit. The latest development is that C2PA assertions can now include properties from both the IPTC Photo Metadata Standard and our video metadata standard, IPTC Video Metadata Hub.

Version 1.2 of the C2PA Specification describes how metadata from either the photo or video standard can be added, using the XMP tag for each field in the JSON-LD markup for the assertion.

For IPTC Photo Metadata properties, the XMP tag name to be used is shown in the “XMP specs” row in the table describing each property in the Photo Metadata Standard specification. For Video Metadata Hub, the XMP tag can be found in the Video Metadata Hub properties table under the “XMP property” column.

We also show in the example assertion how the new accessibility properties can be added using the Alt Text (Accessibility) field which is available in Photo Metadata Standard and will soon be available in a new version of Video Metadata Hub.
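The following Python sketch shows roughly what the JSON-LD payload of such an assertion might look like, keyed by XMP tag names. The helper `iptc_assertion_data` and the sample values are hypothetical, and values are shown as plain strings or lists for simplicity; the C2PA specification remains the authority on the exact structure and on how the assertion is labelled and signed.

```python
import json

# XMP namespace prefixes used by the sample properties below.
XMP_NAMESPACES = {
    "dc": "http://purl.org/dc/elements/1.1/",
    "Iptc4xmpCore": "http://iptc.org/std/Iptc4xmpCore/1.0/xmlns/",
    "Iptc4xmpExt": "http://iptc.org/std/Iptc4xmpExt/2008-02-29/",
}


def iptc_assertion_data(properties):
    """Wrap XMP-tagged properties with a JSON-LD @context.

    Only the namespaces actually used are added to the context. A tag
    with an unknown or missing prefix raises ValueError.
    """
    context = {}
    for tag in properties:
        prefix, _, name = tag.partition(":")
        if not name or prefix not in XMP_NAMESPACES:
            raise ValueError(f"unknown XMP tag: {tag}")
        context[prefix] = XMP_NAMESPACES[prefix]
    return {"@context": context, **properties}


# Sample values are invented for illustration.
data = iptc_assertion_data({
    "dc:creator": ["Julie Smith"],
    "Iptc4xmpCore:AltTextAccessibility": "City skyline at sunset",
})
print(json.dumps(data, indent=2))
```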

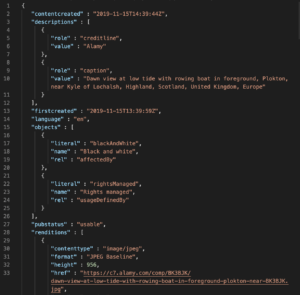

Alamy, a stock photo agency offering a collection of over 300 million images along with millions of videos, has recently launched a new Partnerships API, and has chosen IPTC’s ninjs 2.0 standard as the main format behind the API.

Alamy is an IPTC member via its parent company PA Media, and Alamy staff have contributed to the development of ninjs in recent years, leading to the introduction of ninjs 2.0 in 2021.

“When looking at a response format, we sought to adopt an industry standard which would aid in the communication of the structure of the responses but also ease integration with partners who may already be familiar with the standard,” said Ian Young, Solutions Architect at Alamy.

“With this in mind, we chose IPTC’s news in JSON format, ninjs,” he said. “We selected version 2 specifically due to its structural improvements over version 1 as well as its support for rights expressions.”

Young continued: “ninjs allows us to convey the metadata for our content, links to the media itself and the various supporting renditions as well as conveying machine readable rights in a concise payload.”

“We’ve integrated with customers who are both familiar with IPTC standards and those who are not, and each have found the API equally easy to work with.”

Learn more about ninjs via IPTC’s ninjs overview pages, consult the ninjs User Guide, or try it out yourself using the ninjs generator tool.

Family Tree magazine has published a guide on using embedded metadata for photographs in genealogy – the study of family history.

Rick Crume, a genealogy consultant and the article’s author, says IPTC metadata “can be extremely useful for savvy archivists […] IPTC standards can help future-proof your metadata. That data becomes part of the digital photo, contained inside the file and preserved for future software programs.”

Crume quotes Ken Watson from All About Digital Photos saying “[IPTC] is an internationally recognized standard, so your IPTC/XMP data will be viewable by someone 50 or 100 years from now. The same cannot be said for programs that use some proprietary labelling schemes.”

Crume then adds: “To put it another way: If you use photo software that abides by the IPTC/XMP standard, your labels and descriptive tags (keywords) should be readable by other programs that also follow the standard. For a list of photo software that supports IPTC Photo Metadata, visit the IPTC’s website.”

The article goes on to recommend particular software choices based on IPTC’s list of photo software that supports IPTC Photo Metadata. In particular, Crume recommends that users don’t switch from Picasa to Google Photos, because Google Photos does not support IPTC Photo Metadata in the same way. Instead, he recommends that users stick with Picasa for as long as possible, and then choose another photo management tool from the supported software list.

Similarly, Crume recommends that users should not move from Windows Photo Gallery to the Windows 10 Photos app, because the Photos app does not support IPTC embedded metadata.

Crume then goes on to investigate popular genealogy sites to examine their support for embedded metadata, something that we do not cover in our photo metadata support surveys.

The full article can be found on FamilyTree.com.

The IPTC took part in a panel on Diversity and Inclusion at the CEPIC Congress 2022, the picture industry’s annual get-together, held this year in Mallorca, Spain.

Google’s Anna Dickson hosted the panel, which also included Debbie Grossman of Adobe Stock, Christina Vaughan of ImageSource and Cultura, and photographer Ayo Banton.

Unfortunately Abhi Chaudhuri of Google couldn’t attend due to Covid, but Anna presented his material on Google’s new work surfacing skin tone in Google image search results.

Brendan Quinn, IPTC Managing Director, participated on behalf of the IPTC Photo Metadata Working Group, which put together the Photo Metadata Standard, including the new properties covering accessibility for visually impaired people: Alt Text (Accessibility) and Extended Description (Accessibility).

Brendan also discussed IPTC’s other Photo Metadata properties concerning diversity, including the Additional Model Information which can include material on “ethnicity and other facets of the model(s) in a model-released image”, and the characteristics sub-property of the Person Shown in the Image with Details property which can be used to enter “a property or trait of the person by selecting a term from a Controlled Vocabulary.”

Some interesting conversations ensued around the difficulty of keeping diversity information up to date in an ever-changing world of diversity language, the pros and cons of using controlled vocabularies (pre-selected word lists) to cover diversity information, and the differences in covering identity and diversity information on a self-reported basis versus reporting by the photographer, photo agency or customer.

It’s a fascinating area and we hope to be able to support the photographic industry’s push forward with concrete work that can be implemented at all types of photographic organisations to make the benefits of photography accessible for as many people as possible, regardless of their cultural, racial, sexual or disability identity.